LLM-Friendly PDF Parsing

Working with PDF files has changed a lot over the last few years. LLM-based workflows have made it easier than ever to parse tables from PDF files without compromising on the accuracy of the data points. I had to parse the tables from this report on Population Projections published by the National Commission on Population, Ministry of Health & Family Welfare. There are 21 different table types here, but the actual number of tables is much higher, since most types have separate state-level variants. Some tables are split into two parts on the same page. A year ago, I would have used a UI-based tool like Tabula or a Python library like Camelot to extract tables from this PDF.

But now, for most use-cases, what is left of this task is to feed the PDF report into an LLM and write a good prompt. One issue I see with this approach is token consumption: such tasks burn through tokens rapidly, and a task like this can exhaust the tokens available in a Claude Code Pro session.

This is a good use-case for Docling, an open-source document processing library designed to turn messy, real-world documents into clean, structured data. Docling builds on DocLayNet, a large human-annotated dataset for document-layout analysis, and TableFormer, a family of transformer-based models specialized for accurate table structure recovery and table-text reasoning.

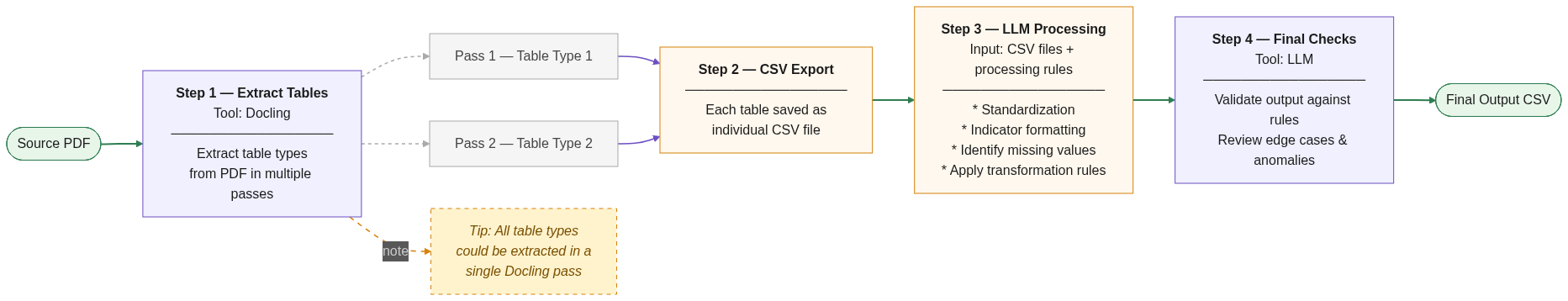

So instead of attaching the PDF directly to an LLM, I first passed it to Docling, which runs locally. It offers a few customisations. The script I used set the page ranges to process, turned OCR off (this was not a scanned document), and applied a few small fixes so that the tables and headings were correctly identified. Docling first converts the document to a DoclingDocument and then exports it in the specified format. By the end of this step, I had one CSV per table. Over the next few prompts, I was able to create a consolidated CSV file in the desired format and run a few data validation checks.
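As a rough sketch of what such a Docling script could look like (not the exact script used here), the function below turns OCR off, restricts conversion to a page range, and writes one CSV per detected table. The names `extract_tables` and `csv_name`, the file naming scheme, and the page range are illustrative assumptions. The Docling calls reflect my understanding of the 2.x Python API and may differ between versions, so check them against the release you have installed.

```python
from pathlib import Path


def csv_name(pdf_stem: str, table_index: int) -> str:
    """Hypothetical naming scheme, e.g. 'report_table_003.csv'."""
    return f"{pdf_stem}_table_{table_index:03d}.csv"


def extract_tables(pdf_path: str, out_dir: str, first_page: int, last_page: int) -> list:
    """Convert a page range of a PDF with Docling and write one CSV per table."""
    # Imported here so the helper above can be used without Docling installed.
    from docling.datamodel.base_models import InputFormat
    from docling.datamodel.pipeline_options import PdfPipelineOptions
    from docling.document_converter import DocumentConverter, PdfFormatOption

    options = PdfPipelineOptions()
    options.do_ocr = False             # digital PDF, not a scan
    options.do_table_structure = True  # run the table structure model

    converter = DocumentConverter(
        format_options={InputFormat.PDF: PdfFormatOption(pipeline_options=options)}
    )
    # page_range is 1-based and inclusive (assumed; available in recent 2.x releases).
    result = converter.convert(pdf_path, page_range=(first_page, last_page))

    out = Path(out_dir)
    out.mkdir(parents=True, exist_ok=True)
    written = []
    for index, table in enumerate(result.document.tables, start=1):
        # Newer releases prefer table.export_to_dataframe(doc=result.document).
        frame = table.export_to_dataframe()
        target = out / csv_name(Path(pdf_path).stem, index)
        frame.to_csv(target, index=False)
        written.append(target)
    return written
```

A call such as `extract_tables("report.pdf", "tables", 10, 40)` (file name and pages are placeholders) would leave the per-table CSVs in `tables/`, ready to be handed to the LLM in place of the full PDF.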

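The PDF processing pipeline looks like this: the PDF goes to Docling running locally, Docling produces one CSV per table, and a few LLM prompts then consolidate the CSVs and validate the data.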
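For the last stage of the pipeline, consolidation and validation, here is a minimal standard-library sketch of the kind of script such prompts might produce. It is an illustration under assumptions, not the script used for this report: it assumes the first column of every per-table CSV is a row label and every other cell should be numeric, and the long output format (`source_table`, `row_label`, `column`, `value`) is a hypothetical choice.

```python
import csv
from pathlib import Path


def consolidate(csv_dir: str, out_path: str) -> list:
    """Melt every per-table CSV into long-format rows and return validation problems."""
    problems = []
    rows = []
    for path in sorted(Path(csv_dir).glob("*.csv")):
        with path.open(newline="", encoding="utf-8") as fh:
            reader = csv.reader(fh)
            header = next(reader, None)
            if not header:
                problems.append(f"{path.name}: empty file")
                continue
            for line_no, record in enumerate(reader, start=2):
                # Check 1: every row has as many cells as the header.
                if len(record) != len(header):
                    problems.append(
                        f"{path.name}:{line_no}: expected {len(header)} cells, got {len(record)}"
                    )
                    continue
                label = record[0]
                for column, value in zip(header[1:], record[1:]):
                    cleaned = value.replace(",", "").strip()
                    # Check 2: data cells parse as numbers once separators are removed.
                    try:
                        float(cleaned)
                    except ValueError:
                        problems.append(
                            f"{path.name}:{line_no}: non-numeric value {value!r} in column {column!r}"
                        )
                    rows.append([path.name, label, column, cleaned])
    with open(out_path, "w", newline="", encoding="utf-8") as fh:
        writer = csv.writer(fh)
        writer.writerow(["source_table", "row_label", "column", "value"])
        writer.writerows(rows)
    return problems
```

Write the consolidated file outside the directory of per-table CSVs so a second run does not pick it up as input. The returned list of problems is short enough to paste into a prompt, which is cheaper than asking the LLM to re-read every table.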

I managed to complete this task within the session token limits of the Claude Code Pro plan. A few other things that helped:

- Heavy usage of the `/clear` command
- A minimal CLAUDE.md file
- Disabling MCP tools

This Claude Code doc has a few more tips to reduce token usage.